TL;DR:

When we built a simple school picker, we uncovered critical lessons for improving SaaS onboarding UX. Speed, smart prediction, and clean data turned out to be essential for building trust through dynamic forms and search experiences. In this article, we share real-world insights and practical advice that product leaders can apply to streamline onboarding, enhance performance, and drive stronger user engagement in SaaS and health tech products.

When we built a simple school picker for a nonprofit client, we didn’t realize we were uncovering UX lessons that would apply to SaaS products across industries.

In SaaS platforms, especially those handling complex onboarding, search, or dynamic forms, the difference between a user-friendly experience and a frustrating one often comes down to the small moments: how fast a form reacts, how smart the suggestions are, how much friction the user feels.

This is the story of how a school picker taught us why UX performance matters so much, and what product leaders can take away when designing high-stakes user flows.

These lessons are essential for improving SaaS onboarding UX, where dynamic forms and search fields often make or break first impressions.

Why fast, intuitive search is critical in SaaS and health tech

Today’s users expect immediacy. Whether they are selecting an organization, entering an address, or searching for a location, any noticeable delay reads as incompetence. For SaaS and health tech products where first impressions matter, especially during onboarding, friction in dynamic fields can kill trust before it is built.

A simple autocomplete field may seem minor, but it becomes a critical point of user perception, especially when improving SaaS onboarding UX. A sluggish search field suggests that the entire platform may be slow, unreliable, or poorly built.

Speed and intuitiveness in form interactions aren’t just UX nice-to-haves. They’re conversion drivers.

A real-world challenge: Building a school picker

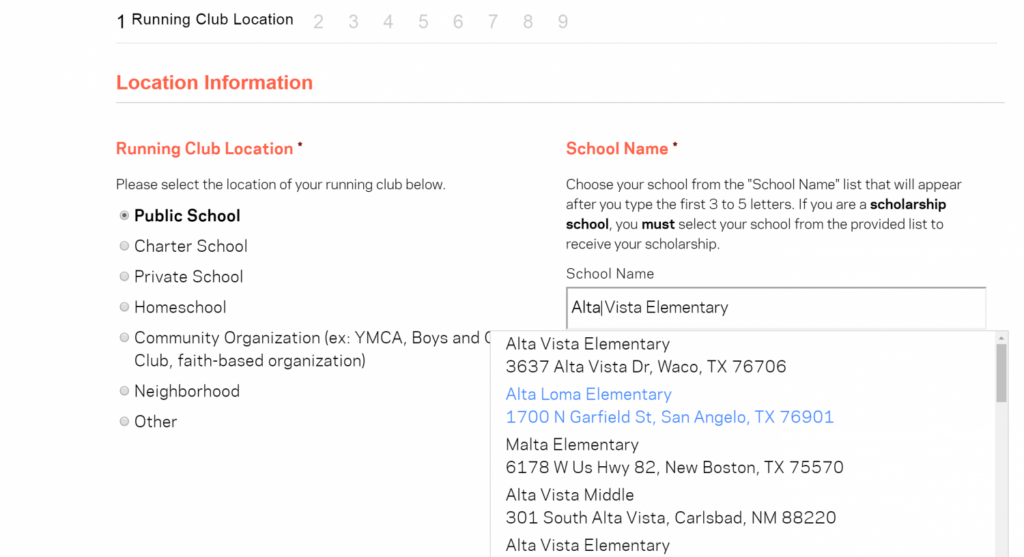

We were tasked with creating a “school picker” tool to allow parents and kids to find their specific school from a nationwide database and join a fitness program. The first solution seemed straightforward: load all the school names into a drop-down menu or search field.

Reality hit fast.

- Data volume: Over 130,000 schools.

- Performance: Initial tests with basic search functions lagged — badly.

- User expectations: Parents expected the tool to “just work” like Google Search.

Our first attempts resulted in delays that broke the experience. Every keystroke felt sluggish. Users got frustrated. Some abandoned the search altogether.

We had to rethink our approach, and fast.

Key lessons for SaaS product leaders

1. Autocomplete speed is non-negotiable

Autocomplete must feel instant. Even a half-second delay can make users doubt the system.

We moved from server-side search to a local index approach. We preloaded lightweight indexes based on the user’s location (more on that below) and searched them locally, so keystrokes no longer triggered server requests. The experience became lightning-fast.

Takeaway for SaaS: For onboarding flows or forms that depend on dynamic search, invest in local search indexes or smart caching strategies to maintain sub-200ms interactions.
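
To make the local-index idea concrete, here is a minimal TypeScript sketch of an in-memory prefix index. It is not the code we shipped. `School`, `normalize`, and `LocalSchoolIndex` are hypothetical names, and the sketch assumes the (location-filtered) list already fits in client memory. Each word prefix maps to the schools containing it. A keystroke then becomes a map lookup plus a small filter instead of a network round trip.

```typescript
// Sketch only: hypothetical names and record shape, not a production implementation.
interface School {
  id: string;
  name: string;
}

// Normalize text so "St. Mary's" and "st marys" compare equal.
function normalize(text: string): string {
  return text
    .toLowerCase()
    .normalize("NFD")
    .replace(/[\u0300-\u036f]/g, "") // strip accents: "École" -> "ecole"
    .replace(/['\u2019]/g, "") // drop apostrophes: "mary's" -> "marys"
    .replace(/[^a-z0-9]+/g, " ") // other punctuation and whitespace -> single space
    .trim();
}

class LocalSchoolIndex {
  // Word prefix -> schools with at least one word starting with that prefix.
  private readonly prefixes = new Map<string, School[]>();
  private readonly maxPrefix: number;

  constructor(schools: School[], maxPrefix = 10) {
    this.maxPrefix = maxPrefix;
    for (const school of schools) {
      const added = new Set<string>(); // keeps each school at most once per prefix
      for (const word of normalize(school.name).split(" ")) {
        for (let len = 1; len <= Math.min(word.length, maxPrefix); len++) {
          const prefix = word.slice(0, len);
          if (added.has(prefix)) continue;
          added.add(prefix);
          const bucket = this.prefixes.get(prefix) ?? [];
          bucket.push(school);
          this.prefixes.set(prefix, bucket);
        }
      }
    }
  }

  // Every typed token must be a prefix of some word in the name, e.g. "linc high".
  search(query: string, limit = 10): School[] {
    const tokens = normalize(query).split(" ").filter(Boolean);
    const first = tokens[0];
    if (first === undefined) return [];
    // The first token's bucket is the candidate set; no server request needed.
    const candidates = this.prefixes.get(first.slice(0, this.maxPrefix)) ?? [];
    const results: School[] = [];
    for (const school of candidates) {
      const words = normalize(school.name).split(" ");
      // Check all tokens in full, since buckets are keyed on at most maxPrefix chars.
      if (tokens.every((t) => words.some((w) => w.startsWith(t)))) {
        results.push(school);
        if (results.length >= limit) break;
      }
    }
    return results;
  }
}

// Example usage
const demoIndex = new LocalSchoolIndex([
  { id: "1", name: "Lincoln Elementary School" },
  { id: "2", name: "Lincoln High School" },
  { id: "3", name: "St. Mary's Academy" },
]);
const demoMatches = demoIndex.search("linc high").map((s) => s.name); // ["Lincoln High School"]
```

The index trades memory for speed. Memory grows with the number of word prefixes, which is one reason to preload a regional slice rather than the full nationwide list.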

2. Intelligent guessing must be invisible

Users shouldn’t have to tell your system everything. It should predict smartly.

We implemented GeoIP lookup to pre-filter school options based on a user’s approximate location. Instead of searching all 130,000 schools, a user would start with just the schools nearest them.

Takeaway for SaaS: Location data, account metadata, or previous session behavior can be used to predict and narrow user inputs, reducing cognitive load and perceived effort.
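
Here is a TypeScript sketch of the pre-filtering step, under stated assumptions. The GeoIP lookup itself is out of scope. The function simply takes an approximate `GeoPoint` (or `null` when the lookup fails) that you would get from whichever GeoIP provider you use. `preFilterByLocation`, the 50 km radius, and the 500-result cap are hypothetical, illustrative choices, not values from our project. Distances use the standard haversine great-circle formula.

```typescript
// Sketch only: hypothetical shapes; coordinates would come from your GeoIP provider.
interface GeoPoint {
  lat: number; // degrees
  lon: number; // degrees
}

interface LocatedSchool {
  id: string;
  name: string;
  location: GeoPoint;
}

const EARTH_RADIUS_KM = 6371;

function toRadians(degrees: number): number {
  return (degrees * Math.PI) / 180;
}

// Great-circle distance between two points using the haversine formula.
function distanceKm(a: GeoPoint, b: GeoPoint): number {
  const dLat = toRadians(b.lat - a.lat);
  const dLon = toRadians(b.lon - a.lon);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRadians(a.lat)) * Math.cos(toRadians(b.lat)) * Math.sin(dLon / 2) ** 2;
  // Clamp guards against rounding pushing the value slightly above 1.
  return 2 * EARTH_RADIUS_KM * Math.asin(Math.min(1, Math.sqrt(h)));
}

// Narrow the list to schools near the approximate user location, nearest first.
// If the location is unknown or nothing is nearby, return the full list so the guess never hides a school.
function preFilterByLocation(
  schools: LocatedSchool[],
  approxLocation: GeoPoint | null,
  radiusKm = 50,
  maxResults = 500,
): LocatedSchool[] {
  if (approxLocation === null) return schools;
  const origin: GeoPoint = approxLocation; // const binding keeps the narrowed type inside callbacks
  const nearby = schools
    .map((school) => ({ school, km: distanceKm(origin, school.location) }))
    .filter((entry) => entry.km <= radiusKm)
    .sort((x, y) => x.km - y.km)
    .slice(0, maxResults)
    .map((entry) => entry.school);
  return nearby.length > 0 ? nearby : schools;
}
```

The fallback to the full list is what keeps the guess invisible. An IP address can place a user in the wrong city, for example behind a VPN or a mobile carrier. A wrong guess should widen the search, not hide the right school.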

3. Data quality impacts UX trust

Our school database needed cleanup. Some schools had outdated names, duplicates, or slight misspellings.

Even with great UI, bad data undermines trust. If users don’t find what they expect quickly, they assume the platform is broken.

Takeaway for SaaS: Data hygiene isn’t just a backend concern. It directly impacts user perception. Poor search results erode user trust faster than almost any other onboarding issue.
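
For data hygiene, a useful first pass is to normalize names and group records that collapse to the same key. The TypeScript sketch below assumes a hypothetical `SchoolRecord` shape with `name` and `city` fields, plus a small, illustrative abbreviation map. It only catches formatting and abbreviation variants, such as "Lincoln Elem." vs "Lincoln Elementary". Genuine misspellings would need fuzzy matching, such as edit distance, and human review before anything is merged.

```typescript
// Sketch only: hypothetical record shape for a raw school dataset.
interface SchoolRecord {
  id: string;
  name: string;
  city: string;
}

// Illustrative abbreviation map; build yours from variants your data actually contains.
const ABBREVIATIONS = new Map<string, string>([
  ["elem", "elementary"],
  ["sch", "school"],
  ["acad", "academy"],
  ["hs", "high school"],
]);

// Lowercase, strip accents and punctuation, collapse whitespace.
function normalizeName(text: string): string {
  return text
    .toLowerCase()
    .normalize("NFD")
    .replace(/[\u0300-\u036f]/g, "")
    .replace(/['\u2019]/g, "")
    .replace(/[^a-z0-9]+/g, " ")
    .trim();
}

// Records that share this key are likely the same school written differently.
function canonicalKey(record: SchoolRecord): string {
  const words = normalizeName(record.name)
    .split(" ")
    .filter(Boolean)
    .map((word) => ABBREVIATIONS.get(word) ?? word);
  return `${words.join(" ")}|${normalizeName(record.city)}`;
}

// Group records by canonical key and return only groups with more than one member.
function findLikelyDuplicates(records: SchoolRecord[]): SchoolRecord[][] {
  const groups = new Map<string, SchoolRecord[]>();
  for (const record of records) {
    const key = canonicalKey(record);
    const group = groups.get(key) ?? [];
    group.push(record);
    groups.set(key, group);
  }
  return Array.from(groups.values()).filter((group) => group.length > 1);
}
```

Keying on city as well as name avoids flagging different schools that happen to share a common name in different towns.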

Practical advice: Applying these lessons to SaaS forms and flows

Not every product needs a school picker, but many SaaS and health tech platforms rely on complex forms, search fields, or dynamic onboarding experiences that can make or break user trust.

If your product includes any of the following, you’re at risk of hidden UX friction:

- Searchable lists (organizations, locations, clients)

- Dynamic registration flows

- Custom onboarding pathways

In these cases, performance isn’t just about speed. It’s about delivering a seamless, trustworthy experience that reduces cognitive load and boosts conversion.

To design for performance-first UX, here’s a checklist for product leaders:

- Audit form fields for performance: simulate slow connections and different device types.

- Use local indexing or predictive narrowing wherever possible to speed up search results.

- Implement invisible smart defaults (e.g., location pre-filters) to ease user effort.

- Regularly clean and validate searchable datasets to maintain trust.

- Benchmark your dynamic interactions against user expectations. Google-level speed is the baseline.

- Prioritize improving SaaS onboarding UX by optimizing search and dynamic form responsiveness.
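
The benchmarking item can be partly automated. This TypeScript sketch replays each query one keystroke at a time against any search function, such as a local index lookup. It then compares the 95th-percentile latency with the sub-200 ms target mentioned earlier. `benchmarkKeystrokes` and `LatencyReport` are hypothetical names. The sketch times only the lookup itself, not rendering or network time. It complements testing on throttled connections and real devices rather than replacing it.

```typescript
// Sketch only: hypothetical helper for timing search lookups per keystroke.
interface LatencyReport {
  samples: number;
  p50Ms: number;
  p95Ms: number;
  withinBudget: boolean;
}

// Nearest-rank percentile of an ascending-sorted, non-empty array.
function percentile(sortedMs: number[], p: number): number {
  const rank = Math.ceil((p / 100) * sortedMs.length) - 1;
  const index = Math.min(Math.max(rank, 0), sortedMs.length - 1);
  return sortedMs[index] ?? 0;
}

// Replays each query one keystroke at a time ("l", "li", "lin", ...) and times every lookup.
// `now` is injectable so tests can use a fake clock; by default it uses performance.now().
function benchmarkKeystrokes(
  search: (query: string) => unknown,
  queries: string[],
  budgetMs = 200,
  now: () => number = () => performance.now(),
): LatencyReport {
  const timings: number[] = [];
  for (const query of queries) {
    for (let len = 1; len <= query.length; len++) {
      const start = now();
      search(query.slice(0, len));
      timings.push(now() - start);
    }
  }
  if (timings.length === 0) throw new Error("benchmarkKeystrokes needs at least one non-empty query");
  timings.sort((a, b) => a - b);
  const p95Ms = percentile(timings, 95);
  return { samples: timings.length, p50Ms: percentile(timings, 50), p95Ms, withinBudget: p95Ms < budgetMs };
}

// Example usage with a naive substring search over a tiny list.
const sampleNames = ["Lincoln Elementary School", "Lincoln High School", "Washington Middle School"];
const sampleReport = benchmarkKeystrokes(
  (q) => sampleNames.filter((n) => n.toLowerCase().includes(q.toLowerCase())),
  ["lincoln high", "washington"],
);
```

Reporting the 95th percentile rather than the average matters because users remember the slow keystrokes, not the typical ones.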

Conclusion

A school picker sounds simple. But its small, often overlooked interactions taught us critical lessons that apply directly to how SaaS products convert, onboard, and retain users.

Building high-performance, user-friendly search flows isn’t optional anymore. It’s fundamental to improving SaaS onboarding UX and setting the stage for long-term customer retention.

Improving SaaS onboarding UX starts with the right strategy.

Let’s connect and build a stronger user experience together.