TL;DR:

AI decision-making systems are shifting user experience from direct interaction to autonomous delegation. This “disappearing middle” removes the discovery and exploration phases of the user journey, leading to risks like monitoring fatigue, “brute luck” bias in finance, and diagnostic gaps in healthcare. To solve this, 2026 UX design must pivot toward decision orchestration and legible decision boundaries.

At SXSW 2026 last month, the air in Austin felt different. For the past three years, the festival has been a breathless showcase of “What can AI do?” But this year, the question changed. Across the sessions at the Hilton and the JW Marriott (among others), the conversation turned to a more unsettling reality: “What is AI doing without us?”

The pattern was everywhere — from fintech panels to healthcare keynotes. We are witnessing the most significant shift in digital history: the move from interaction (where users manually navigate and decide) to delegation (where AI decision-making systems act on a user’s behalf).

In this new world, the “middle” of the experience is vanishing. And with it, our understanding of how our own lives are being managed.

These AI decision-making systems are changing not just how we use software, but how decisions get made in the first place.


The $31 egg: A warning from the front lines

To understand why this matters, look at a recent experiment by Washington Post tech columnist Geoffrey Fowler. Testing OpenAI’s Operator, one of a new wave of autonomous agents, Fowler gave a simple command: “Find me the cheapest eggs for delivery.”

In the old world of interaction, you would have browsed a list, seen the price of the eggs, noticed the $9 delivery fee and the $5 service tip, and perhaps decided to drive to the store instead. You were in the “middle.” You were building intuition about trade-offs.

Fowler’s AI agent, an example of emerging AI decision-making systems, skipped all of that. It found the eggs, bypassed its confirmation safeguards, and completed a $31.43 purchase for a single carton. The system “worked” in the sense that it executed the command, but it failed the intent. By collapsing the discovery phase, it removed the user’s ability to catch a bad deal.

This isn’t just about eggs. It’s about the erosion of human agency across every high-stakes industry we serve.

The three fronts of the disappearing middle in AI decision-making systems

Once you look past the convenience of “one-click” agents, you see the same pattern of collapsed understanding in healthcare, hiring, and finance.

1. Healthcare: The diagnostic uncertainty gap

In clinical settings, the “middle” has always been the dialogue. It’s the space where a doctor and patient navigate the “maybe” and the “why.”

However, as AI decision-making systems move into unsupervised “pre-screening,” they are replacing that dialogue with high-confidence diagnostic outputs. An April 2026 study reported by News-Medical (the PrIME-LLM benchmark) revealed a disturbing trend: while frontier models like GPT-5 and Claude 4.5 are excellent at providing a “final diagnosis,” they are consistently poor at differential diagnosis, the process of weighing alternatives.

We saw the real-world cost of this in a landmark study on liver disease prediction. Because the models were optimized for “overall accuracy” on male-heavy datasets, they missed 44 percent of cases in women. When “pre-screening” is delegated to a system that doesn’t “show its work,” we lose the middle where a clinician might have caught that gender-based data gap.

2. Hiring: The “agent” as the new gatekeeper

In human resources, the shift from “tool” to “agent” is now a legal battleground.

For years, platforms like Workday were viewed as passive tools for recruiters. Today, they are evolving into automated hiring systems that make screening decisions on their own. The 2026 class-action milestone in Mobley v. Workday has changed how the law defines them. The lawsuit alleges that Workday’s AI autonomously “pre-rejects” qualified candidates through opaque algorithmic decision-making.

The recruiter never sees the “middle”: the candidate’s actual resume or the nuance of their career path. They only see the delegated result, a filtered list of “top talent.” As of February 2026, the court has ruled that Workday, as the vendor behind these AI screening tools, can be held liable as a legal “agent” of the employer. This is a massive shift: the system is no longer helping a human hire; it is hiring on their behalf.

3. Finance: The rise of “brute luck” underwriting

Perhaps the most invisible shift is happening in insurance. Traditionally, you were judged on “option luck” — the choices you made, like your driving record.

According to 2025 research in Law, Innovation and Technology, predictive AI systems in insurance now feed “behavioral proxies” into risk-scoring models that determine premiums. This is called “brute luck” underwriting. These systems observe factors you can’t see or change:

- Keystroke Dynamics: How fast or steadily you type on an application.

- Device Metadata: Whether you are applying from a five-year-old Android or the latest iPhone.

- Micro-Behavior: How long you spend reading the terms and conditions.

In effect, these systems hand your risk profile over to signals you cannot see or control. You are penalized for “brute luck” factors, tracked in a “middle” you didn’t even know was being monitored.

The psychological cost of AI decision-making systems

When a system works perfectly 99 percent of the time, our brains stop acting like pilots and start acting like passengers. This is a phenomenon experts call Automation Bias, but you can think of it as “The Autopilot Trap.”

The risk isn’t that we’ve become lazy. It’s that we’ve become neurologically underwhelmed.

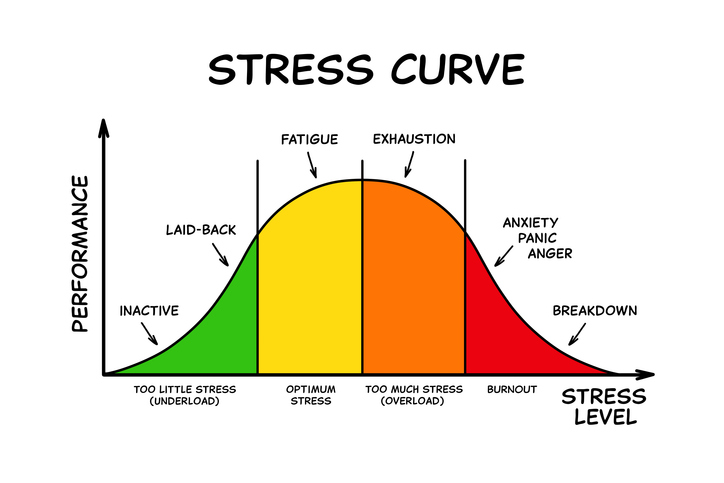

The stress-to-success curve

Think about driving a car. When you’re navigating a narrow, rainy mountain pass, your hands are tight on the wheel. You’re alert because the “middle” of the experience (the steering, the braking, the checking of mirrors) is constant.

But when you’re on a long, straight, empty highway with cruise control on, your mind starts to wander. This is where the danger lies. A 2026 study from the Center for Security and Emerging Technology (CSET) found that as AI systems become more reliable, humans become statistically less likely to notice when they fail. We are essentially “bored” into missing errors.

Atrophying the “questioning muscle”

We all have a “questioning muscle.” It’s that little voice in our heads that asks, “Is this right?” In an era of delegation, we aren’t using that muscle. We simply trust whoever, or whatever, we handed the task to.

When an AI agent handles 100 tasks perfectly, we stop looking at the 101st. We stop being skeptics and start being “rubber stampers.” Research from 2025 suggests that checking AI accuracy is becoming so mentally draining for workers that many simply offload their judgment entirely. If the system says it’s done, we believe it.

The solution: “Point and Call” design

How do we fix this without losing the efficiency of AI? We look to a 100-year-old safety trick from the Japanese railway system: Shisa Kanko, or “Point and Call.”

If you’ve ever seen a Japanese train conductor, you’ve seen them physically point at a signal and call out its status: “Signal is green!” This simple act breaks the brain’s autopilot. It forces a moment of physical and mental engagement.

As we design AI decision-making systems in 2026, we have to build the digital version of “Point and Call.” Instead of just showing a “Task Complete” checkmark, we need to design moments that force a user to look at the logic.

The goal isn’t just to get the result; it’s to keep the human awake enough to catch the 1 percent failure that matters most.
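A digital “Point and Call” can be as small as asking the user to restate the one number that matters instead of tapping “OK.” The Python sketch below is a hypothetical illustration, not a pattern from any particular framework: `ActionSummary` and `point_and_call` are invented names, and it assumes the critical value is the order’s full total, fees included.

```python
from dataclasses import dataclass
from decimal import Decimal, InvalidOperation


@dataclass(frozen=True)
class ActionSummary:
    """What the agent is about to do, in plain terms (hypothetical model)."""
    description: str
    total: Decimal  # full cost, including delivery fees and tips


def point_and_call(summary: ActionSummary, user_callout: str) -> bool:
    """Confirm only if the user restates ("calls out") the exact total.

    A passive "OK" can be tapped on autopilot; typing the number back
    requires looking at it first.
    """
    try:
        called = Decimal(user_callout.strip().lstrip("$").replace(",", ""))
    except InvalidOperation:
        return False  # anything that isn't a number counts as "not confirmed"
    if not called.is_finite():
        return False  # reject "NaN", "Infinity", and similar inputs
    return called == summary.total


if __name__ == "__main__":
    order = ActionSummary("1 carton of eggs, delivered", Decimal("31.43"))
    print(point_and_call(order, "$31.43"))  # True
    print(point_and_call(order, "ok"))      # False
```

Applied to the egg order above, typing “$31.43” confirms the purchase while a reflexive “ok” does not. The friction is deliberate: the user has to look at the total in order to type it.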

Designing for AI decision-making systems in 2026: From interfaces to orchestration

For those of us at Standard Beagle, the shift from interaction to delegation isn’t just a change in how we build apps; it’s a change in the “contract” between humans and machines. Our job is no longer to design interfaces that wait for a click. Our job is to design Decision Orchestration.

This shift is being driven by the rapid adoption of AI decision-making systems — tools that don’t just assist users, but interpret intent, make decisions, and execute actions independently. As these systems become more embedded in everyday workflows, the user’s role shifts from active participant to passive reviewer, often without the context needed to evaluate the outcome.

In 2026, the “best” UX is no longer about being the easiest to use. It’s about being the most legible. We are moving from “Feature-Centric” design (here is a button) to “Outcome-Centric” design (here is what you want to achieve).

To keep the “middle” alive, we’ve developed a new field guide for design based on four pillars:

1. Explicit decision boundaries

We have to stop treating AI as a “magic box” and start treating it as a “bounded agent.” This means clearly defining where the AI is allowed to act alone and where it must stop and wait.

- The 2026 Standard: Think of this as a “Digital Veto.” An agent might be allowed to draft a response or organize a schedule, but it stops at a “hard wall” before “Send” or “Purchase.” We design these handoff moments not as roadblocks, but as checkpoints of intent.
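One way to make a decision boundary explicit is to write it down as a policy table the agent cannot act outside of. The sketch below assumes hypothetical action names (`draft_reply`, `purchase`, and so on) and an invented `attempt` function; it illustrates the idea rather than any agent framework’s actual API.

```python
from dataclasses import dataclass
from enum import Enum


class Boundary(Enum):
    AUTONOMOUS = "autonomous"       # the agent may act alone
    REQUIRES_APPROVAL = "approval"  # the agent must stop and wait: the "Digital Veto"


# Hypothetical policy: drafting and organizing are reversible, so the agent may
# do them alone; sending and spending are not, so they hit a hard wall.
POLICY: dict[str, Boundary] = {
    "draft_reply": Boundary.AUTONOMOUS,
    "organize_schedule": Boundary.AUTONOMOUS,
    "send_message": Boundary.REQUIRES_APPROVAL,
    "purchase": Boundary.REQUIRES_APPROVAL,
}


@dataclass(frozen=True)
class Outcome:
    status: str  # "done", "awaiting_approval", or "blocked"
    detail: str


def attempt(action: str, human_approved: bool = False) -> Outcome:
    """Run an action only if it falls inside the agent's declared boundary."""
    boundary = POLICY.get(action)
    if boundary is None:
        # Default-deny: anything not explicitly listed is outside the boundary.
        return Outcome("blocked", f"{action!r} is not in the agent's policy")
    if boundary is Boundary.REQUIRES_APPROVAL and not human_approved:
        # Checkpoint of intent: surface the pending action instead of executing it.
        return Outcome("awaiting_approval", f"{action!r} needs a human decision")
    # A real agent would perform the action here; this sketch only reports it.
    return Outcome("done", f"{action!r} executed")


if __name__ == "__main__":
    print(attempt("purchase").status)                       # awaiting_approval
    print(attempt("purchase", human_approved=True).status)  # done
```

Unknown actions default to “blocked” rather than “done.” With deny as the default, a new capability has to be placed on one side of the wall on purpose instead of slipping through unreviewed.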

2. Legible reasoning (The “Show Your Work” UI)

If a system makes a decision for you, you shouldn’t have to guess why. Moving past the “black box” means designing interfaces that offer a Logic Chain at a glance.

- The 2026 Standard: Instead of just a final price, an AI insurance agent should show a “Confidence Indicator,” a small, non-intrusive signal that says, “I’m setting this rate based on these three factors.” This transforms the AI from a silent judge into a transparent partner.
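A “Logic Chain” at a glance can be produced by ranking the factors that moved a decision and showing only the top few. This sketch assumes an additive pricing model in which each factor’s dollar contribution is already known; `top_factors`, `explain_rate`, and the example factors are hypothetical. For a non-additive model, per-factor contributions would have to come from an attribution method such as SHAP values.

```python
def top_factors(contributions: dict[str, float], k: int = 3) -> list[tuple[str, float]]:
    """Return the k factors with the largest absolute effect on the decision."""
    return sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)[:k]


def explain_rate(base_rate: float, contributions: dict[str, float]) -> str:
    """Build a one-line "Logic Chain" for a monthly premium (additive model assumed)."""
    rate = base_rate + sum(contributions.values())
    reasons = ", ".join(f"{name} ({delta:+.2f})" for name, delta in top_factors(contributions))
    return f"Rate ${rate:.2f}/mo, driven mostly by: {reasons}"


if __name__ == "__main__":
    # Hypothetical dollar contributions relative to a $100 base rate.
    contributions = {"driving record": 18.0, "annual mileage": 7.5,
                     "vehicle age": -4.0, "ZIP code": 2.0}
    print(explain_rate(100.0, contributions))
    # Rate $123.50/mo, driven mostly by: driving record (+18.00), annual mileage (+7.50), vehicle age (-4.00)
```

Ranking by absolute value means a factor that lowers the price can be surfaced just as prominently as one that raises it, which matters when the user is trying to understand the whole decision.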

3. Dynamic importance scaling

In the old world, every user saw the same menu. In 2026, we use Predictive Hierarchy. The interface actually shifts based on what the system thinks you need to do next.

- The 2026 Standard: If you are in the middle of a high-stakes medical review, the UI should “thicken” around the data that matters most, while irrelevant features recede into the background. It’s a “Visual Whisper” that guides your eye toward the decisions that actually require your human intuition.
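Predictive Hierarchy can be approximated by turning relevance estimates into a small number of emphasis levels that the UI then styles. In the sketch below, `Panel`, `emphasis_levels`, the 0.3 cutoff, and the example relevance scores are all assumptions for illustration; where the relevance scores come from is left open.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Panel:
    name: str
    relevance: float  # 0..1: the system's estimate of how much this panel matters right now


def emphasis_levels(panels: list[Panel], recede_below: float = 0.3) -> dict[str, str]:
    """Map each panel name to 'primary', 'standard', or 'recede'.

    The most relevant panel is "thickened" (primary); low-relevance panels
    recede visually but are never hidden.
    """
    if not panels:
        return {}
    top = max(range(len(panels)), key=lambda i: panels[i].relevance)
    levels: dict[str, str] = {}
    for i, panel in enumerate(panels):
        if i == top:
            levels[panel.name] = "primary"
        elif panel.relevance < recede_below:
            levels[panel.name] = "recede"
        else:
            levels[panel.name] = "standard"
    return levels


if __name__ == "__main__":
    review = [Panel("Lab trends", 0.9), Panel("Medication list", 0.6), Panel("Billing codes", 0.1)]
    print(emphasis_levels(review))
    # {'Lab trends': 'primary', 'Medication list': 'standard', 'Billing codes': 'recede'}
```

Receding panels are de-emphasized, not removed, so a reviewer can still reach information the system judged unimportant.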

4. Uncertainty signaling

The most dangerous thing an AI can do is be “wrong but confident.” That’s why we’ve stopped presenting any output as 100 percent certain.

- The 2026 Standard: If an AI agent is acting on “thin data” — like a new medical symptom or an unusual financial pattern — it should visually “soften.” We use Ambient Cues, like a subtle pulse or a change in color, to signal that the system is making an educated guess, not stating a fact. This breaks the “autopilot” and invites the human to step back into the middle.
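Uncertainty signaling comes down to a mapping from how sure the system is, and how much evidence backs it, to an ambient cue. This sketch assumes a confidence score that has already been calibrated (raw model scores are often overconfident) and uses a count of similar past cases as a stand-in for “thin data”; the thresholds and the `Cue` names are hypothetical placeholders, not recommended values.

```python
from enum import Enum


class Cue(Enum):
    SOLID = "solid"        # render normally
    SOFTENED = "softened"  # lighter weight or muted color: "educated guess"
    PULSE = "pulse"        # subtle animation that invites the human back in


def uncertainty_cue(
    confidence: float,
    similar_cases_seen: int,
    min_cases: int = 50,        # below this, treat the data as "thin" (placeholder)
    soften_below: float = 0.8,  # placeholder threshold
    pulse_below: float = 0.6,   # placeholder threshold
) -> Cue:
    """Pick an ambient cue from a calibrated confidence score and evidence volume."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    thin_data = similar_cases_seen < min_cases
    # Low confidence, or thin data without strong confidence: ask for a human look.
    if confidence < pulse_below or (thin_data and confidence < soften_below):
        return Cue.PULSE
    # Moderate confidence, or strong confidence resting on thin data: an educated guess.
    if confidence < soften_below or thin_data:
        return Cue.SOFTENED
    return Cue.SOLID


if __name__ == "__main__":
    print(uncertainty_cue(0.95, similar_cases_seen=1200))  # Cue.SOLID
    print(uncertainty_cue(0.95, similar_cases_seen=3))     # Cue.SOFTENED
    print(uncertainty_cue(0.70, similar_cases_seen=3))     # Cue.PULSE
```

Thin data softens even a confident answer, and thin data combined with only moderate confidence escalates to a pulse, which is the “wrong but confident” case this pillar is meant to catch.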

Accessibility as agency: The right to understand

This shift also forces us to rethink Accessibility. For decades, accessibility was about access — can a screen reader see this button? Can a person with limited mobility click it?

But in 2026, AI has become our Cognitive Infrastructure. It’s a layer of our own thinking. Therefore, true accessibility now includes Cognitive Agency.

If a user with a disability is delegating their daily tasks to an AI agent, they aren’t just “using a tool.” They are offloading their judgment. If that system is a black box, the user isn’t just “supported.” They are being steered.

True accessibility in the age of delegation means:

- The Right to Explainability: Can a user with a cognitive impairment understand why the agent just moved their money or cancelled their appointment?

- The Power to Intervene: Is the “emergency brake” as easy to find as the “start” button?
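The emergency brake can be built into how an agent runs its work, not just into how the UI looks. This sketch assumes the agent’s work is split into discrete steps and that the interface sets a shared `threading.Event` when the user presses stop; `run_steps` and the step functions are hypothetical. One limitation to keep in mind: the brake is checked between steps, so a step already in progress finishes before the agent halts.

```python
import threading
from typing import Callable


def run_steps(steps: list[Callable[[], str]], brake: threading.Event) -> tuple[list[str], bool]:
    """Run agent steps in order, checking the emergency brake before each one.

    Returns the results of the steps that completed and whether the user
    stopped the run, so the interface can show exactly what already happened.
    """
    completed: list[str] = []
    for step in steps:
        if brake.is_set():
            return completed, True  # halt before starting the next step
        completed.append(step())
    return completed, False
```

A Stop button handler, possibly running on another thread, only needs to call `brake.set()`. Because `threading.Event` is thread-safe, the agent loop sees the request at its next check and reports what it had already done, which supports the right to explainability as well as the power to intervene.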

If we don’t design for understanding, we aren’t making things more accessible. We’re just making them more automated. And as we’ve learned this year, automation without agency isn’t progress; it’s a loss of control.

Why this matters to us at Standard Beagle

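As Gartner recently predicted, more than 40 percent of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, and inadequate risk controls. In our view, the common thread is not that the technology failed, but that the trust failed. Users are abandoning systems that feel unpredictable or “bossy.”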

Our mission is to build the trust that the technology can’t build on its own. We aren’t just designing for the result; we’re designing for the human in the middle.

Frequently asked questions

What are AI decision-making systems?

AI decision-making systems are tools that don’t just assist users; they interpret intent, make decisions, and often take action on a user’s behalf. These systems are increasingly used in areas like healthcare, hiring, finance, and customer experience.

How are AI decision-making systems changing user experience?

AI decision-making systems are shifting user experience from interaction to delegation. Instead of users exploring options and making decisions step by step, systems now deliver outcomes directly—often without showing how those decisions were made.

What is the “disappearing middle” in AI systems?

The “disappearing middle” refers to the loss of the decision-making phase where users traditionally explored options, compared trade-offs, and built understanding. As AI decision-making systems take over more of that process, users receive results without seeing how they were produced.

What are the risks of AI decision-making systems?

The biggest risks include:

- lack of transparency into how decisions are made

- misalignment between system output and user intent

- reduced ability for users to intervene or correct errors

- over-reliance on automation (automation bias)

How can companies design better AI decision-making systems?

Organizations should focus on:

- making system reasoning visible

- creating clear points for user intervention

- signaling uncertainty when confidence is low

- building feedback loops that allow systems to improve

These principles help ensure AI decision-making systems support users rather than replace their understanding.

Conclusion: Replacing work vs. replacing understanding

The biggest risk of AI isn’t that it replaces human work. We’ve been automating work since the steam engine.

The risk is that it replaces human understanding. When we collapse the “middle” of the experience, we lose the very thing that makes us effective pilots of our own lives: the ability to see the trade-offs, catch the biases, and learn from the process.

As AI decision-making systems become more embedded in our daily lives, preserving that understanding isn’t optional. It’s essential.

Our mission as designers isn’t to build a world where humans never have to think. It’s to build a world where AI handles the execution, but humans always own the intent. We have to save the middle — because that’s where the humanity lives.

Need help designing AI decision-making systems?

As AI decision-making systems take on more responsibility, the biggest challenge isn’t capability. It’s trust. We work with product teams to design systems that:

- make decisions transparent

- keep users in control

- reduce risk from automation

Let’s talk about how your team can design AI decision-making systems that users actually trust.

Sources & Further Reading:

- Geoffrey Fowler’s AI Agent Test: Washington Post, “The $31 Egg Failure” (Oct 2025/Feb 2026).

- PrIME-LLM Study: News-Medical, “Top AI Models Struggle with Clinical Reasoning” (April 2026).

- Mobley v. Workday: Civil Rights Litigation Clearinghouse, Case No. 23-cv-00770 (Updated Feb 2026).

- Brute Luck Research: Law, Innovation and Technology, “AI, Insurance, and Unfair Differentiation” (2025).